This is your brain on chips.

Computer chips have followed Moore’s Law since Intel co-founder Gordon Moore first described the trend in 1965: roughly every two years, the number of transistors engineers can fit on a single chip has doubled, and computing power has climbed along with it. But now chip-makers are running into a wall: physics. Soon (perhaps as soon as 2015), miniaturization will no longer increase computing power.

To get around that limit, an IBM-led research team has announced a new kind of “cognitive” chip that isn’t programmed the way conventional computers are. Instead, it is modeled on the human brain’s ability to learn, reorganizing its own connections much as neurons do.
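What does it mean for a chip to reorganize itself “the way neurons do”? One classic idea from neuroscience is Hebbian learning, often summed up as “cells that fire together wire together.” The sketch below is an illustrative assumption only: IBM hasn’t said its chip uses this exact rule, and the helper `hebbian_update` and its learning rate are made up for the example.

```python
# Toy Hebbian learning: a connection strengthens when the units on both
# ends are active together. Illustrative assumption only; this is not a
# description of IBM's chip.


def hebbian_update(weights, pre, post, rate=0.1):
    """Return new weights after one Hebbian step (hypothetical helper).

    weights[i][j] is the strength of the link from input unit j to
    output unit i; pre and post hold activity levels (0 or 1 here).
    """
    return [
        [w + rate * post[i] * pre[j] for j, w in enumerate(row)]
        for i, row in enumerate(weights)
    ]


# Two input units feed one output unit; both links start at zero.
weights = [[0.0, 0.0]]

# Input 0 keeps firing at the same time as the output; input 1 stays silent.
for _ in range(5):
    weights = hebbian_update(weights, pre=[1, 0], post=[1])

print(weights)  # link 0 has grown to about 0.5; link 1 is still 0.0
```

Nobody told the network which input mattered; the link simply strengthened through use. Left unchecked, this bare rule lets weights grow forever, which is why practical versions add some form of decay or normalization.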

Hearing the news, nerds were quick to crack jokes about “Skynet,” the malevolent artificial intelligence of the Terminator movies, or to invoke The Matrix, Dune, and a hundred other science-fiction sagas built on the conceit that once computers start learning, humans have opened a Pandora's box of troubles.

Nerves aren’t calmed by the news that some of the project’s funding comes from the Defense Advanced Research Projects Agency (DARPA), the Pentagon research agency that tends to attract conspiracy theories but also helped give us the internet and GPS.

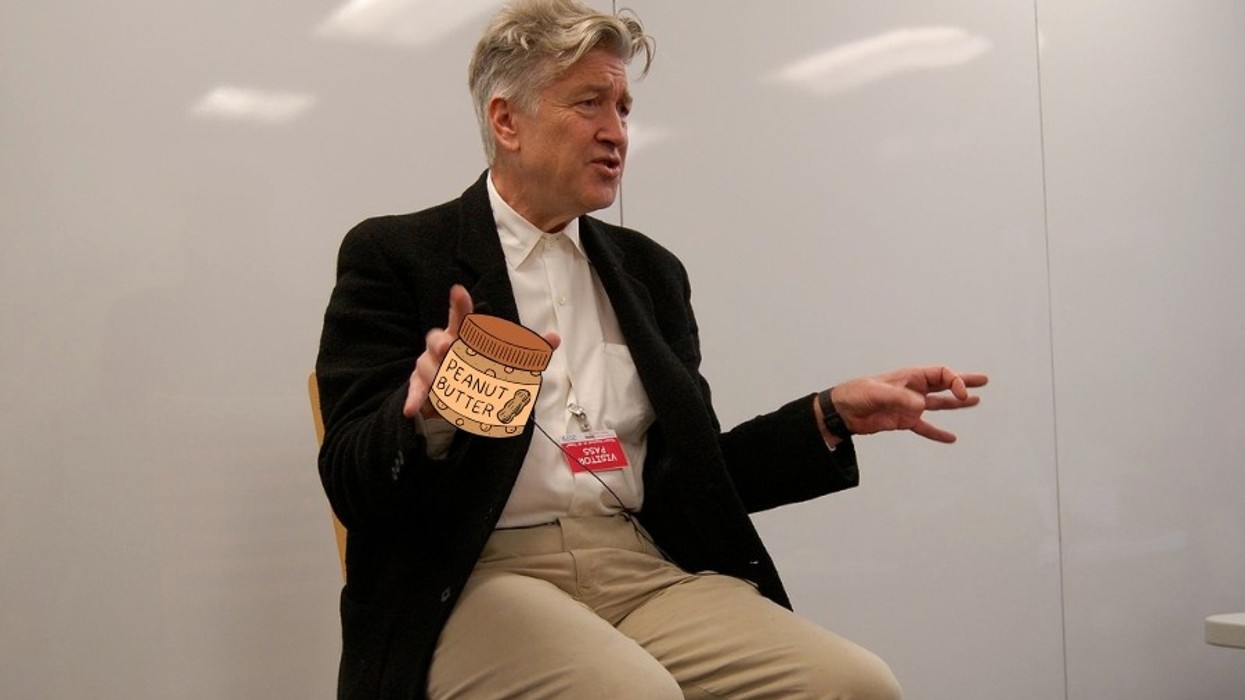

“There’s certainly nothing to fear here; we’re talking about very, very simple capabilities,” says Dr. Chris Kello, a cognitive scientist at the University of California, Merced, who is working on the project. He’s a little miffed that I’ve asked. “Nobody talks about Terminator every time you pick up a phone and talk to a computer when you’re booking a flight.”

Then again, those voice-recognition systems work so poorly that they can’t possibly be a threat, unless they’re just smart enough to relish hoarse, angry callers screaming “existing reservation!” again and again.

Regardless, Kello explains that the key to the next generation of computers is a processor that handles data en masse with “lots of very small neuron-like assessing units [that] coordinate to solve problems,” rather than a single large processor that works through data one step at a time.
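To make the contrast concrete, here is a toy model of “lots of very small neuron-like units”: a layer of leaky integrate-and-fire neurons, a standard textbook simplification. It is an illustrative assumption, not IBM’s circuit design; the class name `NeuronLayer` and the leak and threshold values are invented for the example. Each unit follows the same tiny rule and depends only on its own input and its own state, so on brain-like hardware every unit could get its own circuit and update simultaneously. The Python loop merely simulates that lockstep.

```python
# Toy layer of leaky integrate-and-fire neurons. Illustrative assumption
# only: the model, names, and parameter values are not IBM's design.


class NeuronLayer:
    """Many tiny units, each applying the same simple rule to its own input."""

    def __init__(self, size, leak=0.9, threshold=1.0):
        self.leak = leak            # fraction of stored charge kept each tick
        self.threshold = threshold  # charge at which a unit fires
        self.potentials = [0.0] * size

    def step(self, inputs):
        """Advance every unit by one tick and return a 0/1 spike per unit."""
        spikes = []
        for i, x in enumerate(inputs):
            # Each unit reads only its own state and input, so on parallel
            # hardware all of these updates could happen at the same time.
            v = self.potentials[i] * self.leak + x  # leak, then integrate
            if v >= self.threshold:
                spikes.append(1)
                v = 0.0  # reset after firing
            else:
                spikes.append(0)
            self.potentials[i] = v
        return spikes


layer = NeuronLayer(size=3)
for _ in range(3):
    print(layer.step([0.5, 0.2, 1.2]))
# [0, 0, 1]
# [0, 0, 1]
# [1, 0, 1]
```

The first unit fires only after charge builds up over a few ticks, the middle unit’s weak input leaks away before it can reach the threshold, and the third fires every time. No single unit does anything impressive; whatever the layer accomplishes comes from many of them working side by side.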

“If you think about how amazing our visual capabilities are, the ability to take a large amount of data coming in […] that data is very noisy, and represents a very complex world,” he says. “For the human visual system to be able to put together the pieces of the world through this noisy array is something that current computers really can’t touch.”

Kello’s job is to help test the chip and teach it to learn by applying what we know about how the human brain gathers information and adapts to it. At UC Merced, the team has built a virtual model of the campus for simulated agents to explore.

Is this where androids dream of electric sheep?

“The tests are much what you might imagine testing animal intelligence, or human intelligence, for that matter,” he says. “You put organisms in an environment and give them tasks and see how they do.”

Organisms? IBM’s press release notes that the chips contain no biological material, nor do they rely on nanotechnology, a frequently cited path toward brain-like computers. But, Kello says, “in all of those cases you’re dealing with systems where uncertainty is the rule rather than the exception.”

The ability to handle uncertainty while interpreting massive amounts of data could make cognitive computers far more effective at tasks that involve interacting with the real world, from monitoring busy intersections to collecting tsunami-warning data.

Right now, the researchers are only in phase two of the project, testing and refining chips with about a million neurons. Only in phase four, according to a schedule laid out by DARPA, does the team envision building a robot whose chips hold about 100 million neurons, still a small fraction of the roughly 86 billion in the human brain. At that point, we at GOOD will happily welcome our new robot overlords.

Or just share in the advantages of a major 21st-century innovation. We’ll see how it turns out.

Photos courtesy IBM, U.C. Merced.