Justice Ruth Bader Ginsburg died on Friday, the Supreme Court announced.

Chief Justice John Roberts said in a statement, “Our nation has lost a jurist of historic stature.”

Even before her appointment, she had reshaped American law. When he nominated Ginsburg to the Supreme Court, President Bill Clinton compared her legal work on behalf of women to the epochal work of Thurgood Marshall on behalf of African-Americans.

The comparison was entirely appropriate: Just as Marshall oversaw the legal strategy that culminated in Brown v. Board of Education, the 1954 case that outlawed segregated schools, Ginsburg coordinated a similar effort against sex discrimination.

Decades before she joined the court, Ginsburg’s work as an attorney in the 1970s fundamentally changed the Supreme Court’s approach to women’s rights, and the modern skepticism about sex-based policies stems in no small way from her lawyering. Ginsburg’s work helped to change the way we all think about women – and men, for that matter.

I’m a legal scholar who studies social reform movements and I served as a law clerk to Ginsburg when she was an appeals court judge. In my opinion – as remarkable as Marshall’s work on behalf of African-Americans was – in some ways Ginsburg faced more daunting prospects when she started.

Starting at zero

When Marshall began challenging segregation in the 1930s, the Supreme Court had rejected some forms of racial discrimination even though it had upheld segregation.

When Ginsburg started her work in the 1960s, the Supreme Court had never invalidated any type of sex-based rule. Worse, it had rejected every challenge to laws that treated women worse than men.

For instance, in 1873, the court allowed Illinois authorities to ban Myra Bradwell from becoming a lawyer because she was a woman. Justice Joseph P. Bradley, widely viewed as a progressive, wrote that women were too fragile to be lawyers: “The paramount destiny and mission of woman are to fulfil the noble and benign offices of wife and mother. This is the law of the Creator.”

And in 1908, the court upheld an Oregon law that limited the number of hours that women – but not men – could work. The opinion relied heavily on a famous brief submitted by Louis Brandeis to support the notion that women needed protection to avoid harming their reproductive function.

As late as 1961, the court upheld a Florida law that for all practical purposes kept women from serving on juries because they were “the center of the home and family life” and therefore need not incur the burden of jury service.

Challenging paternalistic notions

Ginsburg followed Marshall’s approach to promote women’s rights – despite some important differences between segregation and gender discrimination.

Segregation rested on the racist notion that blacks were less than fully human and deserved to be treated like animals. Gender discrimination reflected paternalistic notions of female frailty. Those notions placed women on a pedestal – but also denied them opportunities.

Either way, though, blacks and women got the short end of the stick.

Ginsburg started with a seemingly inconsequential case. Reed v. Reed challenged an Idaho law requiring probate courts to appoint men to administer estates, even if there were a qualified woman who could perform that task.

Sally and Cecil Reed, the long-divorced parents of a teenage son who committed suicide while in his father’s custody, both applied to administer the boy’s tiny estate.

The probate judge appointed the father as required by state law. Sally Reed appealed the case all the way to the Supreme Court.

Ginsburg did not argue the case, but wrote the brief that persuaded a unanimous court in 1971 to invalidate the state’s preference for males. As the court’s decision stated, that preference was “the very kind of arbitrary legislative choice forbidden by the Equal Protection Clause of the 14th Amendment.”

Two years later, Ginsburg won in her first appearance before the Supreme Court. She appeared on behalf of Air Force Lt. Sharron Frontiero. Frontiero was required by federal law to prove that her husband, Joseph, was dependent on her for at least half his economic support in order to qualify for housing, medical and dental benefits.

If Joseph Frontiero had been the service member, the couple would have automatically qualified for those benefits. Ginsburg argued that sex-based classifications such as the one Sharron Frontiero challenged should be treated the same as the now-discredited race-based policies.

By an 8–1 vote, the court in Frontiero v. Richardson agreed that this sex-based rule was unconstitutional. But the justices could not agree on the legal test to use for evaluating the constitutionality of sex-based policies.

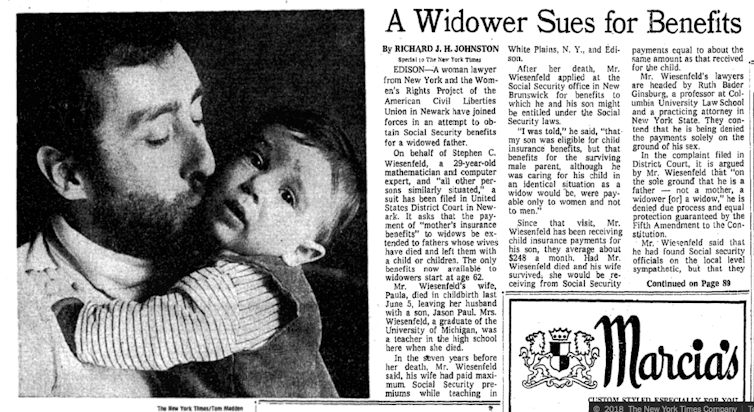

New York Times article about the Wiesenfeld case, which refers to Ginsburg as ‘a woman lawyer.’ New York Times

Strategy: Represent men

In 1974, Ginsburg suffered her only loss in the Supreme Court, in a case that she entered at the last minute.

Mel Kahn, a Florida widower, asked for the property tax exemption that state law allowed only to widows. The Florida courts ruled against him.

Ginsburg, working with the national ACLU, stepped in after the local affiliate brought the case to the Supreme Court. But a closely divided court upheld the exemption as compensation for women who had suffered economic discrimination over the years.

Despite the unfavorable result, the Kahn case showed an important aspect of Ginsburg’s approach: her willingness to work on behalf of men challenging gender discrimination. She reasoned that rigid attitudes about sex roles could harm everyone and that the all-male Supreme Court might more easily get the point in cases involving male plaintiffs.

She turned out to be correct, just not in the Kahn case.

Ginsburg represented widower Stephen Wiesenfeld in challenging a Social Security Act provision that provided parental benefits only to widows with minor children.

Wiesenfeld’s wife had died in childbirth, so he was denied benefits even though he faced all of the challenges of single parenthood that a mother would have faced. The Supreme Court gave Wiesenfeld and Ginsburg a win in 1975, unanimously ruling that sex-based distinction unconstitutional.

And two years later, Ginsburg successfully represented Leon Goldfarb in his challenge to another sex-based provision of the Social Security Act: Widows automatically received survivor’s benefits on the death of their husbands. But widowers could receive such benefits only if the men could prove that they were financially dependent on their wives’ earnings.

Ginsburg also wrote an influential brief in Craig v. Boren, the 1976 case that established the current standard for evaluating the constitutionality of sex-based laws.

Ginsburg at the 2015 State of the Union address. Reuters/Joshua Roberts

Like Wiesenfeld and Goldfarb, the challengers in the Craig case were men. Their claim seemed trivial: They objected to an Oklahoma law that allowed women to buy low-alcohol beer at age 18 but required men to be 21 to buy the same product.

But this deceptively simple case illustrated the vices of sex stereotypes: Aggressive men (and boys) drink and drive, women (and girls) are demure passengers. And those stereotypes affected everyone’s behavior, including the enforcement decisions of police officers.

Under the standard delineated by the justices in the Craig case, such a law can be justified only if it is substantially related to an important governmental interest.

Among the few laws that satisfied this test was a California law that punished sex with an underage female but not with an underage male as a way to reduce the risk of teen pregnancy.

These are only some of the Supreme Court cases in which Ginsburg played a prominent part as a lawyer. She handled many lower-court cases as well. She had plenty of help along the way, but everyone recognized her as the key strategist.

In the century before Ginsburg won the Reed case, the Supreme Court never met a gender classification that it didn’t like. Since then, sex-based policies usually have been struck down.

I believe President Clinton was absolutely right in comparing Ruth Bader Ginsburg’s efforts to those of Thurgood Marshall, and in appointing her to the Supreme Court.

This article originally appeared on The Conversation.